Most People Use AI… But Don’t Really Understand What They’re Using

Over the past two years, AI has shown up everywhere.

It ranges from ChatGPT and Cursor to countless AI agents that write code, generate content, and even automate workflows.

On the surface, it feels like:

AI is becoming a universal skill - something anyone can use.

But if you look a bit deeper, there’s an uncomfortable truth:

Most people using AI… don’t really understand what they’re using.

AI Is Being Packaged Too Well

In the past, when you used software:

you knew whether you were using Excel or Photoshop

you understood what each tool was good at

Now:

AI Agents sit in the middle

models are hidden behind the scenes

everything is wrapped into a smooth experience

You only see:

prompt → output

You don’t see:

which model is running

how deep the reasoning is

the real cost behind each request

This leads to a key consequence:

AI becomes a convenient black box instead of a controllable tool.
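
One way to make the box a little less black is to record, for every request, the three things listed above: the model, the reasoning depth, and the cost. The sketch below is only an illustration. The model names, the `reasoning_effort` label, and the per-token prices are all made-up assumptions, not real provider data.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens.
# Real prices differ by provider and change over time.
PRICES_PER_MILLION = {
    "small-model": {"input": 0.50, "output": 1.50},
    "large-model": {"input": 5.00, "output": 15.00},
}


@dataclass
class RequestRecord:
    model: str             # which model actually ran
    reasoning_effort: str  # a label you choose, e.g. "low" or "high"
    input_tokens: int
    output_tokens: int

    def cost_usd(self) -> float:
        """Cost of this single request under the assumed price table."""
        price = PRICES_PER_MILLION[self.model]
        total = (self.input_tokens * price["input"]
                 + self.output_tokens * price["output"])
        return total / 1_000_000


# The same prompt size sent to two different (hypothetical) models.
records = [
    RequestRecord("small-model", "low", 2_000, 500),
    RequestRecord("large-model", "high", 2_000, 500),
]

for r in records:
    print(f"{r.model:12s} effort={r.reasoning_effort:4s} cost=${r.cost_usd():.5f}")
```

With these assumed prices, the same request costs ten times more on the larger model. The exact numbers don't matter; the point is that the difference stays invisible unless you log it.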

3 Types of AI Users (Which One Are You?)

1. Casual Users - “As long as it works”

They don’t care about:

which model

why it’s right or wrong

reliability

They only ask:

“Does it get the job done?”

For them:

AI = a smarter version of Google

2. Experienced Users - “They feel the difference”

They start noticing:

this model writes better code

that one writes better content

some are fast but make weird mistakes

The problem:

they don’t know why

they can’t tell, ahead of time, which model fits which task

=> This is probably the largest group today.

3. Power Users - “They treat AI as a resource”

They don’t see AI as just a tool.

They see it as:

CPU

RAM

or cloud resources

They understand:

which tasks need strong models

which only need lightweight ones

the trade-offs among cost, speed, and quality

Examples:

Simple CRUD → lightweight model

Debugging race conditions → strong model

SEO content → a model that is stronger at writing
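
The examples above can be sketched as a tiny routing rule: pick the cheapest model that is good enough for the task. Everything here is an assumption for illustration: the model names, the cost and latency figures, and the quality ratings are invented, and squeezing "quality" into a single number is a simplification (in practice a model can be better at writing and worse at code).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost: float     # relative cost per request (hypothetical)
    latency: float  # typical seconds per request (hypothetical)
    quality: int    # 1 (weak) to 5 (strong), a subjective rating


# A made-up catalog; replace with your own measurements.
CATALOG = [
    ModelProfile("light", cost=1.0, latency=1.0, quality=2),
    ModelProfile("writer", cost=3.0, latency=2.0, quality=3),
    ModelProfile("strong", cost=10.0, latency=8.0, quality=5),
]

# Minimum quality each task type needs; the values are illustrative guesses.
TASK_MIN_QUALITY = {
    "crud": 2,
    "seo_content": 3,
    "race_condition_debugging": 5,
}


def pick_model(task: str, max_latency: float = float("inf")) -> ModelProfile:
    """Return the cheapest model that meets the task's quality and latency needs."""
    needed = TASK_MIN_QUALITY[task]
    candidates = [
        m for m in CATALOG
        if m.quality >= needed and m.latency <= max_latency
    ]
    if not candidates:
        raise ValueError(f"no model satisfies task={task!r} within {max_latency}s")
    return min(candidates, key=lambda m: m.cost)


for task in TASK_MIN_QUALITY:
    print(task, "->", pick_model(task).name)
```

The latency limit makes the trade-off explicit: ask for race-condition debugging in under five seconds and this catalog has no answer, which is exactly the kind of constraint a power user reasons about before sending the request.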

For them:

AI is not an assistant - it’s leverage

The Core Problem: AI Agents Hide the Differences

AI Agents are convenient.

But they come with a side effect:

They make all models look the same.

You no longer see:

why an output is good

why an output fails

which model is actually worth the cost

You only see:

“This works / This doesn’t”

Why This Matters

Because once AI becomes the baseline:

Average users → average output

System thinkers → superior output

The difference is no longer:

whether you use AI or not

It becomes:

how well you understand it

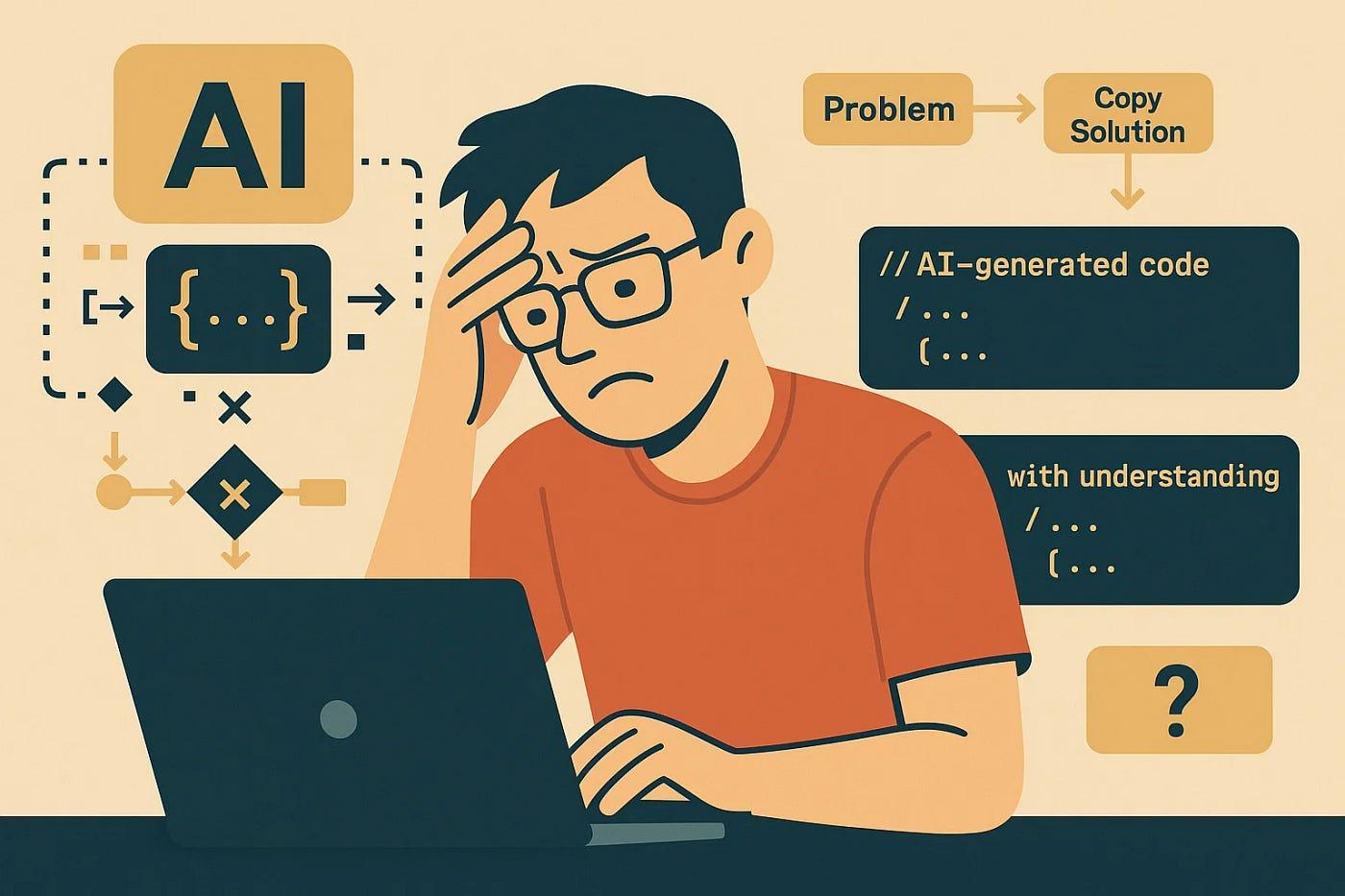

An Uncomfortable Truth (But It Needs To Be Said)

Right now, many people:

- think they are “good at AI”

- but are actually just “good at prompting”

That’s not wrong - but it’s not enough.

Because:

- Prompting is just the interface, not the engine

If you don’t understand:

- when models fail

- why hallucination happens

- how to break tasks down

You will eventually hit a ceiling.

So What Should You Do?

If you are a developer or building products:

Don’t just:

use AI to save time

Instead:

treat it as a system

understand different task types

match the right model to the right job

A simple way to think about it:

Repetitive, clear tasks → lightweight models

Deep reasoning tasks → strong models

Consistency-critical tasks → control the context you give the model
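
"Controlling context" can sound abstract, so here is a minimal sketch of one interpretation: assemble the prompt in a fixed order from pinned instructions and examples, and fingerprint that fixed part so you notice when it silently changes. The function names `build_context` and `fingerprint`, and the prompt layout, are hypothetical choices for this example, not any library's API.

```python
import hashlib
import json


def build_context(instructions: str,
                  examples: list[tuple[str, str]],
                  user_input: str) -> str:
    """Assemble a prompt in a fixed order so identical inputs give identical text."""
    parts = [f"INSTRUCTIONS:\n{instructions.strip()}"]
    for i, (question, answer) in enumerate(examples, start=1):
        parts.append(f"EXAMPLE {i}:\nQ: {question.strip()}\nA: {answer.strip()}")
    parts.append(f"INPUT:\n{user_input.strip()}")
    return "\n\n".join(parts)


def fingerprint(instructions: str, examples: list[tuple[str, str]]) -> str:
    """Short hash of the fixed part of the context, to detect silent changes."""
    payload = json.dumps(
        {"instructions": instructions.strip(), "examples": examples},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


INSTRUCTIONS = "Answer in one sentence."
EXAMPLES = [
    ("What is a mutex?",
     "A mutex is a lock that lets one thread at a time enter a critical section."),
]

prompt_a = build_context(INSTRUCTIONS, EXAMPLES, "What is a semaphore?")
prompt_b = build_context(INSTRUCTIONS, EXAMPLES, "What is a semaphore?")

print(prompt_a == prompt_b)  # same inputs, same prompt text
print(fingerprint(INSTRUCTIONS, EXAMPLES))
```

This does not make model output deterministic. It only removes one source of variation, the prompt itself, so that when results drift you can rule out the context before blaming the model.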

Conclusion

AI does not make everyone equal.

In fact:

AI widens the gap between people who understand systems and those who only use tools.

The real question is:

Do you want to stop at “using AI”…

or build a real advantage from it?